This week I sat down with two Grade 7 students to conference on a math performance task, and we did something I want to make a habit of: we all read the feedback together and each of us annotated the documents as we went — their observations alongside mine.

The task and its evaluation were both AI-generated. The task was a rooftop garden design problem spanning fractions, algebra, data analysis, and budgeting, aligned to all four categories of the Ontario Growing Success Achievement Chart. The feedback was detailed and criterion-referenced, with specific commentary for each question and conference prompts prepared in advance. Our focus was on that feedback loop: what did the assessment reveal, what did it miss, and what did the students themselves have to say about it?

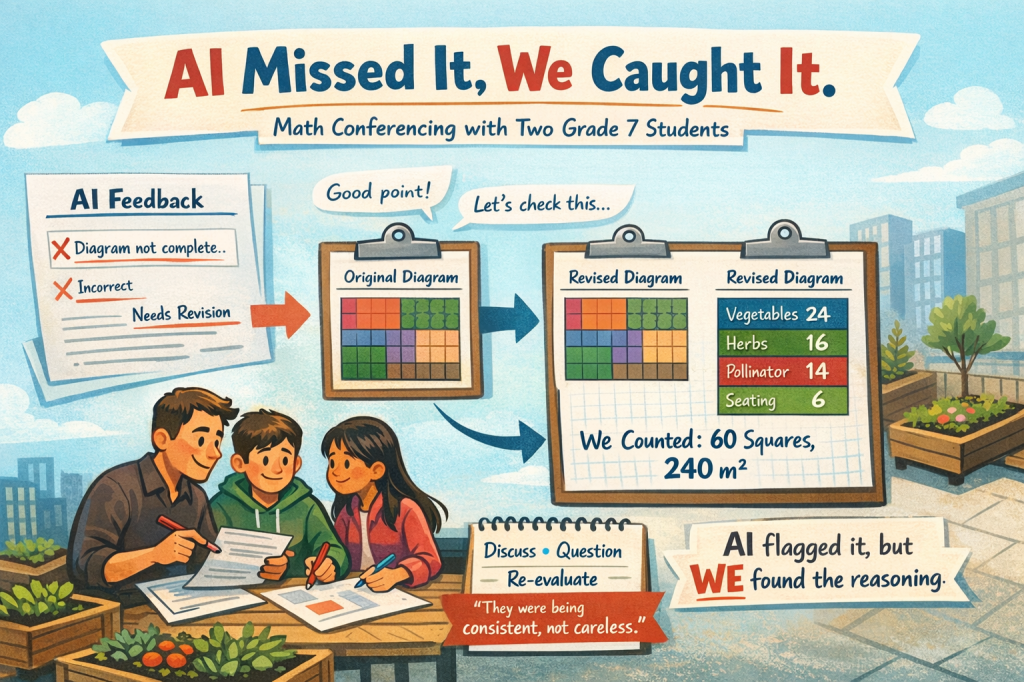

As the three of us read through the documents together, something stopped us. The students had originally submitted a colour-coded grid diagram, but the AI had assessed the PDF without picking up the colour, so it effectively missed the diagram and assumed it hadn’t been completed. The students flagged it.

We looked at the original, counted the squares section by section, and it became clear that every area matched their proposed redesign from Part B exactly — vegetables at 24 squares, herbs at 16, pollinator at 14, seating at 6, totalling 60 squares and 240 m² without a remainder. To produce that, you have to know your target area, divide by 4 m² per square, then design a compact rectangular region with that exact integer count, and do it for all four sections at once. That is spatial and proportional reasoning applied deliberately to a real constraint.
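The count we did by hand can be written out as a short check. This is only a sketch of the arithmetic described above: the square counts and the 4 m² per square come from the students' diagram, while the function and variable names are my own, and the per-section areas are derived from the counts rather than quoted from the task.

```python
# Each grid square represents 4 m² (from the task's diagram scale).
SQUARE_AREA_M2 = 4

# Square counts per section, as counted on the students' diagram.
squares = {"vegetables": 24, "herbs": 16, "pollinator": 14, "seating": 6}


def section_areas(square_counts, square_area=SQUARE_AREA_M2):
    """Convert square counts into areas in m²."""
    return {name: count * square_area for name, count in square_counts.items()}


def squares_needed(target_area_m2, square_area=SQUARE_AREA_M2):
    """Work backwards from a target area to a whole number of squares.

    Raises ValueError if the area does not divide evenly, which is the
    'without a remainder' condition the students had to satisfy.
    """
    count, remainder = divmod(target_area_m2, square_area)
    if remainder != 0:
        raise ValueError(f"{target_area_m2} m² is not a whole number of squares")
    return count


areas = section_areas(squares)        # derived areas, not quoted from the task
total_squares = sum(squares.values())
total_area = sum(areas.values())

print(areas)
print(total_squares, total_area)
```

Running the conversion in both directions is the point: every section has to come out as a whole number of squares, and the four sections together have to fill the grid exactly.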

So we did what made sense: we redid the diagram with proper labels and resubmitted it for re-evaluation. The AI had flagged their use of the redesigned areas throughout the rest of the task as a cascading error — with the labelled diagram now in front of us, the re-evaluation told a different story.

When I asked why they had carried the redesigned areas through the rest of the task, their answer was essentially: we proposed a new garden design in Part B, so we applied that new design everywhere, to the tray calculation, the budget, the diagram, all of it. If the plan changed, the whole plan should change.

The AI had read that as an error: careless copying of the wrong values. But when you heard them explain it, the logic held up. They weren’t being careless. They were being consistent. They made a design decision and followed it through. That is a different thing entirely, and it took the three of us together to surface it. It also cost them marks, because the task instructions pointed back to the original areas. We talked through exactly why, and what they’d watch for next time. Then they wrote their own feedback into the document alongside mine: what made sense, what they’d do differently, what they knew they’d got right.

The AI produced feedback precise enough to be worth conferencing over, but it was the three of us together — reading, questioning, counting squares — that turned the assessment into actual learning. One of the students explained their design decision and she was right about the logic, even though the task had different expectations. No model caught that. We did.

AI in the classroom isn’t about replacing the teacher. It’s about raising the floor of what’s possible so the ceiling — the conversation, the moment of genuine inquiry, the student explaining their reasoning to an adult who is actually listening — can happen more often, with better material underneath it.

What the AI Did Well — and What Only We Could Do

What AI contributed

What only we could do

The ceiling is the conversation. AI raised the floor — better material, more detailed feedback, faster turnaround. But the learning happened when three people sat in a room, read the document together, and one student said something that made the teacher pause. No model catches that. That’s the point.

[Infographic: AI-Assisted Assessment & Feedback Conference. Panels: AI builds the floor; Student work; AI raises the detail; The conversation the document made possible; Student agency. Footer: Assessment as learning · Growing Success]

Rich Baxter is a Grade 7 teacher at John McCrae Public School (TDSB), Founder & CEO of Innovation in Education, and creator of the Lern2ern platform. He is shortlisted for the 2025 QS Reimagine Education Award in Nurturing Employability.

#AssessmentAsLearning #GrowingSuccess #AIinEducation #OntarioEducation #HighImpactPractices #StudentVoice #FeedbackConference #TeacherLeadership #EdTech #HumanPlusAI